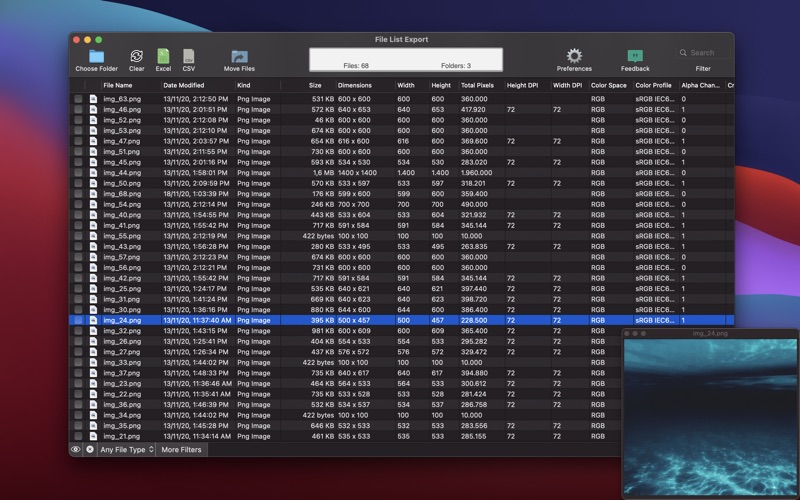

The tool can be downloaded below for free; it is a zip file containing a single exe file for installation. Version 4.1.8 (2018/10/24): Another problem with estimating addresses of flights in list solved. File List Export 2.4.0 is an easy-to-use application that helps you create a list of files for any need. List all your photos, all your videos, or all your files. If you need to create a list of files, this app is for you.

One of the most frequently required features when implementing scrapers is being able to store the scraped data properly and, quite often, that means generating an “export file” with the scraped data (commonly called “export feed”) to be consumed by other systems.

Scrapy provides this functionality out of the box with the Feed Exports, which allow you to generate feeds with the scraped items, using multiple serialization formats and storage backends.

Serialization formats

For serializing the scraped data, the feed exports use the Item exporters. The formats supported out of the box are described in the sections that follow.

You can also extend the supported formats through the FEED_EXPORTERS setting.

JSON

- Value for the format key in the FEEDS setting: json
- Exporter used: JsonItemExporter
- See the JsonItemExporter documentation if you're using JSON with large feeds.

JSON lines

- Value for the format key in the FEEDS setting: jsonlines
- Exporter used: JsonLinesItemExporter
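The difference between the two JSON formats can be sketched with the standard json module alone. This is not Scrapy's exporter code, only an illustration with made-up items: a JSON feed is a single array that has to be parsed as a whole, while a JSON lines feed holds one object per line and can be read incrementally.

```python
import json

# Made-up items, for illustration only.
items = [{"title": "A", "price": 10}, {"title": "B", "price": 12}]

# JSON: a single array containing every item.
json_feed = json.dumps(items)

# JSON lines: one JSON-encoded item per line.
jsonlines_feed = "\n".join(json.dumps(item) for item in items)

# A JSON lines feed can be consumed one line at a time.
parsed = [json.loads(line) for line in jsonlines_feed.splitlines()]
```

Reading line by line is what makes the JSON lines format easier to handle when a feed grows large.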

CSV

- Value for the format key in the FEEDS setting: csv
- Exporter used: CsvItemExporter
- To specify columns to export and their order use FEED_EXPORT_FIELDS. Other feed exporters can also use this option, but it is important for CSV because unlike many other export formats CSV uses a fixed header.

XML

- Value for the format key in the FEEDS setting: xml
- Exporter used: XmlItemExporter

Pickle

- Value for the format key in the FEEDS setting: pickle
- Exporter used: PickleItemExporter

Marshal

- Value for the format key in the FEEDS setting: marshal
- Exporter used: MarshalItemExporter

Storages

When using the feed exports you define where to store the feed using one or multiple URIs (through the FEEDS setting). The feed exports support multiple storage backend types, which are defined by the URI scheme.

The storage backends supported out of the box are:

- Local filesystem
- FTP
- S3 (requires botocore)
- Google Cloud Storage (GCS) (requires google-cloud-storage)
- Standard output

Some storage backends may be unavailable if the required external libraries are not available. For example, the S3 backend is only available if the botocore library is installed.

Storage URI parameters

The storage URI can also contain parameters that get replaced when the feed is being created. These parameters are:

- %(time)s - gets replaced by a timestamp when the feed is being created
- %(name)s - gets replaced by the spider name

Any other named parameter gets replaced by the spider attribute of the same name. For example, %(site_id)s would get replaced by the spider.site_id attribute the moment the feed is being created.

Here are some examples to illustrate:

- Store in FTP using one directory per spider:

ftp://user:[email protected]/scraping/feeds/%(name)s/%(time)s.json

- Store in S3 using one directory per spider:

s3://mybucket/scraping/feeds/%(name)s/%(time)s.json
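Because the parameters use printf-style named placeholders, their effect can be previewed with plain Python string formatting. A minimal sketch, assuming a hypothetical spider name and timestamp; the bucket and path come from the S3 example above:

```python
# Preview how named URI parameters expand (illustrative values only).
uri_template = "s3://mybucket/scraping/feeds/%(name)s/%(time)s.json"

# Hypothetical values; Scrapy supplies the real ones when the feed is created.
params = {"name": "bookspider", "time": "2020-03-28T14-45-08"}

feed_uri = uri_template % params
print(feed_uri)
```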

Storage backends

Local filesystem

The feeds are stored in the local filesystem.

- URI scheme: file
- Example URI: file:///tmp/export.csv
- Required external libraries: none

Note that for the local filesystem storage (only) you can omit the scheme if you specify an absolute path like /tmp/export.csv. This only works on Unix systems though.

FTP

The feeds are stored on an FTP server.

- URI scheme: ftp
- Example URI: ftp://user:[email protected]/path/to/export.csv
- Required external libraries: none

FTP supports two different connection modes: active or passive. Scrapy uses the passive connection mode by default. To use the active connection mode instead, set the FEED_STORAGE_FTP_ACTIVE setting to True.

This storage backend uses delayed file delivery.

S3

The feeds are stored on Amazon S3.

- URI scheme: s3
- Example URIs:
  - s3://mybucket/path/to/export.csv
  - s3://aws_key:aws_secret@mybucket/path/to/export.csv
- Required external libraries: botocore >= 1.4.87

The AWS credentials can be passed as user/password in the URI, or they can be passed through the following settings:

- AWS_ACCESS_KEY_ID
- AWS_SECRET_ACCESS_KEY

You can also define a custom ACL for exported feeds using this setting:

- FEED_STORAGE_S3_ACL

This storage backend uses delayed file delivery.

Google Cloud Storage (GCS)

The feeds are stored on Google Cloud Storage.

- URI scheme: gs
- Example URIs:
  - gs://mybucket/path/to/export.csv
- Required external libraries: google-cloud-storage.

For more information about authentication, please refer to Google Cloud documentation.

You can set a Project ID and Access Control List (ACL) through the following settings:

- FEED_STORAGE_GCS_ACL
- GCS_PROJECT_ID

This storage backend uses delayed file delivery.

Standard output

The feeds are written to the standard output of the Scrapy process.

- URI scheme: stdout
- Example URI: stdout:
- Required external libraries: none

Delayed file delivery

As indicated above, some of the described storage backends use delayed file delivery.

These storage backends do not upload items to the feed URI as those items are scraped. Instead, Scrapy writes items into a temporary local file, and only once all the file contents have been written (i.e. at the end of the crawl) is that file uploaded to the feed URI.

If you want item delivery to start earlier when using one of these storage backends, use FEED_EXPORT_BATCH_ITEM_COUNT to split the output items into multiple files, with the specified maximum item count per file. That way, as soon as a file reaches the maximum item count, that file is delivered to the feed URI, allowing item delivery to start well before the end of the crawl.
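The buffering pattern described above can be sketched in a few lines. This is not Scrapy's implementation: the function name is hypothetical, and a local file move stands in for the upload that a real backend would perform.

```python
import json
import os
import shutil
import tempfile


def export_with_delayed_delivery(items, destination):
    """Write every item to a temporary file, then deliver it in one step."""
    with tempfile.NamedTemporaryFile("w", suffix=".jl", delete=False) as tmp:
        for item in items:
            tmp.write(json.dumps(item) + "\n")
        tmp_path = tmp.name
    # Stand-in for the upload: a real backend would send the file to FTP, S3, etc.
    shutil.move(tmp_path, destination)


# Made-up items and a throwaway destination, for illustration only.
target = os.path.join(tempfile.mkdtemp(), "export.jl")
export_with_delayed_delivery([{"id": 1}, {"id": 2}], target)
```

Nothing reaches the destination until the last item has been written, which is why splitting the output into batches lets delivery start earlier.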

Settings

These are the settings used for configuring the feed exports:

- FEEDS (mandatory)

FEEDS

New in version 2.1.

Default: {}

A dictionary in which every key is a feed URI (or a pathlib.Path object) and each value is a nested dictionary containing configuration parameters for the specific feed.

This setting is required for enabling the feed export feature.

See Storage backends for supported URI schemes.

The following is a list of the accepted keys and the setting that is used as a fallback value if that key is not provided for a specific feed definition:

- format: the serialization format. This setting is mandatory, there is no fallback value.
- batch_item_count: falls back to FEED_EXPORT_BATCH_ITEM_COUNT. New in version 2.3.0.
- encoding: falls back to FEED_EXPORT_ENCODING.
- fields: falls back to FEED_EXPORT_FIELDS.
- indent: falls back to FEED_EXPORT_INDENT.
- item_export_kwargs: dict with keyword arguments for the corresponding item exporter class.
- overwrite: whether to overwrite the file if it already exists (True) or append to its content (False). The default value depends on the storage backend:
  - Local filesystem: False
  - FTP: True. Note: Some FTP servers may not support appending to files (the APPE FTP command).
  - S3: True (appending is not supported)
  - Standard output: False (overwriting is not supported)
- store_empty: falls back to FEED_STORE_EMPTY.
- uri_params: falls back to FEED_URI_PARAMS.
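As an illustration of the keys listed above, a FEEDS definition might look like the following sketch. The file names and field names are placeholders chosen for this example, not values required by Scrapy.

```python
from pathlib import Path

# settings.py (illustrative values only)
FEEDS = {
    # A feed URI given as a plain string.
    "items.json": {
        "format": "json",
        "encoding": "utf8",
        "store_empty": False,
        "indent": 4,
    },
    # A feed URI given as a pathlib.Path object.
    Path("items.csv"): {
        "format": "csv",
        "fields": ["price", "name"],
    },
}
```

Keys that a feed omits, such as encoding in the CSV feed here, fall back to the corresponding global setting.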

FEED_EXPORT_ENCODING

Default: None

The encoding to be used for the feed.

If unset or set to None (default) it uses UTF-8 for everything except JSON output, which uses safe numeric encoding (\uXXXX sequences) for historic reasons.

Use utf-8 if you want UTF-8 for JSON too.

FEED_EXPORT_FIELDS

Default: None

A list of fields to export, optional. Example: FEED_EXPORT_FIELDS = ["foo", "bar", "baz"].

Use the FEED_EXPORT_FIELDS option to define fields to export and their order.

When FEED_EXPORT_FIELDS is empty or None (default), Scrapy uses the fields defined in item objects yielded by your spider.

If an exporter requires a fixed set of fields (this is the case for the CSV export format) and FEED_EXPORT_FIELDS is empty or None, then Scrapy tries to infer field names from the exported data - currently it uses field names from the first item.
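The effect of a fixed header can be sketched with the standard csv module. This is an illustration with made-up items and a hypothetical helper, not Scrapy's CsvItemExporter: when no field list is given, the header is taken from the first item, so a field that only appears in later items is left out.

```python
import csv
import io

# Made-up items; the second one carries a field the first one lacks.
items = [
    {"name": "A", "price": 10},
    {"name": "B", "price": 12, "stock": 3},
]


def export_csv(items, fields=None):
    # With no explicit field list, take the field names from the first item.
    fieldnames = fields or list(items[0].keys())
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=fieldnames, extrasaction="ignore", lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(items)
    return buffer.getvalue()


inferred = export_csv(items)  # header: name,price
explicit = export_csv(items, fields=["stock", "name", "price"])  # header: stock,name,price
```

Listing the fields explicitly controls both which columns appear and their order, which is the role FEED_EXPORT_FIELDS plays for CSV feeds.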

FEED_EXPORT_INDENT

Default: 0

Amount of spaces used to indent the output on each level. If FEED_EXPORT_INDENT is a non-negative integer, then array elements and object members will be pretty-printed with that indent level. An indent level of 0 (the default), or negative, will put each item on a new line. None selects the most compact representation.

Currently implemented only by JsonItemExporter and XmlItemExporter, i.e. when you are exporting to .json or .xml.

FEED_STORE_EMPTY

Default: False

Whether to export empty feeds (i.e. feeds with no items).

FEED_STORAGES

Default: {}

A dict containing additional feed storage backends supported by your project. The keys are URI schemes and the values are paths to storage classes.

FEED_STORAGE_FTP_ACTIVE

Default: False

Whether to use the active connection mode when exporting feeds to an FTP server (True) or use the passive connection mode instead (False, default).

For information about FTP connection modes, see What is the difference between active and passive FTP?.

FEED_STORAGE_S3_ACL

Default: '' (empty string)

A string containing a custom ACL for feeds exported to Amazon S3 by your project.

For a complete list of available values, access the Canned ACL section on Amazon S3 docs.

FEED_STORAGES_BASE

A dict containing the built-in feed storage backends supported by Scrapy. You can disable any of these backends by assigning None to their URI scheme in FEED_STORAGES. E.g., to disable the built-in FTP storage backend (without replacement), assign None to the ftp scheme in FEED_STORAGES in your settings.py.

FEED_EXPORTERS

Default: {}

A dict containing additional exporters supported by your project. The keys are serialization formats and the values are paths to Item exporter classes.
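One way to picture how a project-level dict combines with the built-in defaults, including the convention of assigning None to disable a built-in entry, is a plain dict merge. This sketch is not Scrapy's code; the helper name and the dotted paths are hypothetical.

```python
def resolve_components(base, project):
    """Merge built-in and project-level mappings, dropping entries set to None."""
    merged = {**base, **project}
    return {key: value for key, value in merged.items() if value is not None}


# Hypothetical dotted paths, for illustration only.
BASE = {"json": "builtin.JsonExporter", "csv": "builtin.CsvExporter"}
PROJECT = {"csv": None, "yaml": "myproject.exporters.YamlExporter"}

active = resolve_components(BASE, PROJECT)
```

Under these assumptions the csv entry disappears and the yaml entry is added, while json keeps its built-in class.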

FEED_EXPORTERS_BASE

A dict containing the built-in feed exporters supported by Scrapy. You can disable any of these exporters by assigning None to their serialization format in FEED_EXPORTERS. E.g., to disable the built-in CSV exporter (without replacement), assign None to the csv format in FEED_EXPORTERS in your settings.py.

FEED_EXPORT_BATCH_ITEM_COUNT

New in version 2.3.0.

Default: 0

If assigned an integer number higher than 0, Scrapy generates multiple output files storing up to the specified number of items in each output file.

When generating multiple output files, you must use at least one of the following placeholders in the feed URI to indicate how the different output file names are generated:

- %(batch_time)s - gets replaced by a timestamp when the feed is being created (e.g. 2020-03-28T14-45-08.237134)
- %(batch_id)d - gets replaced by the 1-based sequence number of the batch. Use printf-style string formatting to alter the number format. For example, to make the batch ID a 5-digit number by introducing leading zeroes as needed, use %(batch_id)05d (e.g. 3 becomes 00003, 123 becomes 00123).

For instance, if your settings set FEED_EXPORT_BATCH_ITEM_COUNT to 100 and the feed URI given on your command line contains one of the placeholders above, the crawl can generate several output files in the target directory, one per batch. The first and second files contain exactly 100 items. The last one contains 100 items or fewer.
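The file naming for batches can be previewed with ordinary string formatting. A minimal sketch, assuming a hypothetical URI template and a crawl of 250 items; for simplicity it stamps every batch with the same time, whereas each real batch is stamped when it is created.

```python
from datetime import datetime

BATCH_ITEM_COUNT = 100
# Hypothetical feed URI template using both placeholders.
uri_template = "dirname/%(batch_id)05d-filename-%(batch_time)s.json"


def batch_uris(total_items, started):
    """Return one URI per batch for a crawl that produced total_items items."""
    # ISO timestamp with ':' replaced by '-', as in the placeholder description.
    batch_time = started.isoformat().replace(":", "-")
    batches = -(-total_items // BATCH_ITEM_COUNT)  # ceiling division
    return [
        uri_template % {"batch_id": batch_id, "batch_time": batch_time}
        for batch_id in range(1, batches + 1)
    ]


uris = batch_uris(250, datetime(2020, 3, 28, 14, 45, 8, 237134))
```

With 250 items and a batch size of 100, this yields three URIs: two for full batches and one for the remaining 50 items.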

FEED_URI_PARAMS

Default: None

A string with the import path of a function to set the parameters to apply with printf-style string formatting to the feed URI.

The function takes two arguments, params and spider, and returns a dict of key-value pairs to apply to the feed URI using printf-style string formatting.

- params (dict) – default key-value pairs. Specifically:
  - batch_id: ID of the file batch. See FEED_EXPORT_BATCH_ITEM_COUNT. If FEED_EXPORT_BATCH_ITEM_COUNT is 0, batch_id is always 1. New in version 2.3.0.
  - batch_time: UTC date and time, in ISO format with : replaced with -. See FEED_EXPORT_BATCH_ITEM_COUNT.
  - time: batch_time, with microseconds set to 0.
- spider (scrapy.spiders.Spider) – source spider of the feed items

For example, to include the name of the source spider in the feed URI:

- Define a function somewhere in your project that returns the default parameters plus a spider_name entry holding the spider's name.
- Point FEED_URI_PARAMS to that function in your settings.
- Use %(spider_name)s in your feed URI.
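The three steps above can be sketched as follows. The module path, the stand-in spider class, and the default parameter values are assumptions made for this example; only the params and spider arguments and the spider_name placeholder come from the text.

```python
# myproject/utils.py (hypothetical module path)
def uri_params(params, spider):
    # Keep the default parameters and add the spider's name.
    return {**params, "spider_name": spider.name}


# settings.py would then point to the function (hypothetical import path):
# FEED_URI_PARAMS = "myproject.utils.uri_params"


class DemoSpider:
    """Stand-in for a scrapy.spiders.Spider instance, for illustration only."""

    name = "quotes"


# Made-up default parameters of the kind described above.
defaults = {
    "batch_id": 1,
    "batch_time": "2020-03-28T14-45-08.237134",
    "time": "2020-03-28T14-45-08",
}

feed_uri = "%(spider_name)s-%(batch_id)d.jl" % uri_params(defaults, DemoSpider())
```

Returning a new dict that extends params keeps the default placeholders usable alongside the custom one.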

Specifies the Excel (.xlsx) Extensions to the Office Open XML SpreadsheetML File Format, which are extensions to the Office Open XML file formats as described in [ISO/IEC-29500-1]. The extensions are specified using conventions provided by the Office Open XML file formats as described in [ISO/IEC-29500-3].

This page and associated content may be updated frequently. We recommend you subscribe to the RSS feed to receive update notifications.

Published Version

| Date | Protocol Revision | Revision Class | Downloads |
|---|---|---|---|
| 8/17/2021 | 22.0 | Major | PDF, DOCX |

Previous Versions

| Date | Protocol Revision | Revision Class | Downloads |
|---|---|---|---|
| 4/22/2021 | 21.0 | Major | PDF, DOCX |
| 10/15/2020 | 20.0 | Major | PDF, DOCX |
| 2/19/2020 | 19.0 | Major | PDF, DOCX |
| 11/19/2019 | 18.0 | Major | PDF, DOCX |
| 3/19/2019 | 17.1 | Minor | PDF, DOCX |
| 1/11/2019 | 17.0 | Major | PDF, DOCX |
| 12/11/2018 | 16.0 | Major | PDF, DOCX |
| 10/10/2018 | 15.0 | Major | PDF, DOCX |
| 8/28/2018 | 14.0 | Major | |
| 8/1/2018 | 13.0 | Major | PDF, DOCX |
| 6/8/2018 | 12.0 | Major | PDF, DOCX |
| 5/9/2018 | 11.0 | Major | PDF, DOCX |
| 4/27/2018 | 10.0 | Major | PDF, DOCX |
| 12/12/2017 | 9.0 | Major | PDF, DOCX |
| 6/20/2017 | 8.0 | None | PDF, DOCX |
| 1/18/2017 | 8.0 | Major | PDF, DOCX |
| 11/14/2016 | 7.1 | None | PDF, DOCX |
| 9/29/2016 | 7.1 | Minor | PDF, DOCX |
| 9/4/2015 | 7.0 | Major | PDF, DOCX |
| 3/16/2015 | 6.0 | Major | PDF, DOCX |
| 10/30/2014 | 5.1 | Minor | PDF, DOCX |
| 7/31/2014 | 5.0 | Major | PDF, DOCX |
| 4/30/2014 | 4.3 | Minor | PDF, DOCX |
| 2/10/2014 | 4.2 | None | PDF, DOCX |
| 11/18/2013 | 4.2 | Minor | PDF, DOCX |
| 7/30/2013 | 4.1 | None | PDF, DOCX |
| 2/11/2013 | 4.1 | Minor | PDF, DOCX |
| 10/8/2012 | 4.0 | Major | |
| 7/16/2012 | 3.0 | Major | |
| 4/11/2012 | 2.0 | None | |
| 1/20/2012 | 2.0 | Major | |
| 6/10/2011 | 1.5 | None | |
| 3/18/2011 | 1.5 | Minor | |
| 12/17/2010 | 1.04 | None | |
| 11/15/2010 | 1.04 | None | |
| 9/27/2010 | 1.04 | None | |
| 7/23/2010 | 1.04 | None | |
| 6/29/2010 | 1.04 | Editorial | |
| 6/7/2010 | 1.03 | Editorial | |
| 4/30/2010 | 1.02 | Editorial | |
| 3/31/2010 | 1.01 | Editorial | |
| 2/19/2010 | 1.0 | Major | |
| 11/6/2009 | 0.3 | Editorial | |
| 8/28/2009 | 0.2 | Editorial | |
| 7/13/2009 | 0.1 | Major | |

Preview Versions

From time to time, Microsoft may publish a preview, or pre-release, version of an Open Specifications technical document for community review and feedback. To submit feedback for a preview version of a technical document, please follow any instructions specified for that document. If no instructions are indicated for the document, please provide feedback by using the Open Specification Forums.

The preview period for a technical document varies. Additionally, not every technical document will be published for preview.

A preview version of this document may be available on the Word, Excel, and PowerPoint Standards Support page. After the preview period, the most current version of the document is available on this page.

Development Resources

Find resources for creating interoperable solutions for Microsoft software, services, hardware, and non-Microsoft products:

Plugfests and Events, Test Tools, Development Support, and Open Specifications Dev Center.

Intellectual Property Rights Notice for Open Specifications Documentation

- Technical Documentation. Microsoft publishes Open Specifications documentation (“this documentation”) for protocols, file formats, data portability, computer languages, and standards support. Additionally, overview documents cover inter-protocol relationships and interactions.

- Copyrights. This documentation is covered by Microsoft copyrights. Regardless of any other terms that are contained in the terms of use for the Microsoft website that hosts this documentation, you can make copies of it in order to develop implementations of the technologies that are described in this documentation and can distribute portions of it in your implementations that use these technologies or in your documentation as necessary to properly document the implementation. You can also distribute in your implementation, with or without modification, any schemas, IDLs, or code samples that are included in the documentation. This permission also applies to any documents that are referenced in the Open Specifications documentation.

- No Trade Secrets. Microsoft does not claim any trade secret rights in this documentation.

- Patents. Microsoft has patents that might cover your implementations of the technologies described in the Open Specifications documentation. Neither this notice nor Microsoft's delivery of this documentation grants any licenses under those patents or any other Microsoft patents. However, a given Open Specifications document might be covered by the Microsoft Open Specifications Promise or the Microsoft Community Promise. If you would prefer a written license, or if the technologies described in this documentation are not covered by the Open Specifications Promise or Community Promise, as applicable, patent licenses are available by contacting [email protected].

- License Programs. To see all of the protocols in scope under a specific license program and the associated patents, visit the Patent Map.

- Trademarks. The names of companies and products contained in this documentation might be covered by trademarks or similar intellectual property rights. This notice does not grant any licenses under those rights. For a list of Microsoft trademarks, visit www.microsoft.com/trademarks.

- Fictitious Names. The example companies, organizations, products, domain names, email addresses, logos, people, places, and events that are depicted in this documentation are fictitious. No association with any real company, organization, product, domain name, email address, logo, person, place, or event is intended or should be inferred.

Reservation of Rights. All other rights are reserved, and this notice does not grant any rights other than as specifically described above, whether by implication, estoppel, or otherwise.

Tools. The Open Specifications documentation does not require the use of Microsoft programming tools or programming environments in order for you to develop an implementation. If you have access to Microsoft programming tools and environments, you are free to take advantage of them. Certain Open Specifications documents are intended for use in conjunction with publicly available standards specifications and network programming art and, as such, assume that the reader either is familiar with the aforementioned material or has immediate access to it.

Support. For questions and support, please contact [email protected].